This section describes the architecture and the training process of Shallow-ProtoPNet, as well as the dataset used in this study.

Shallow-ProtoPNet architecture

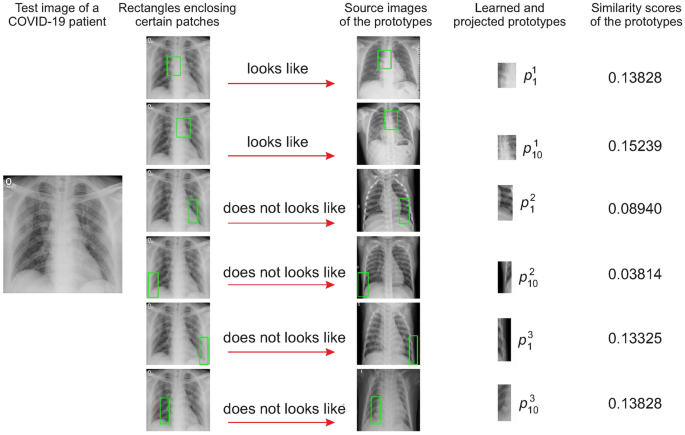

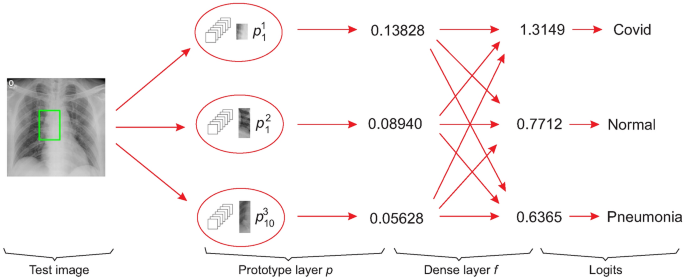

As shown in Fig. 2, Shallow-ProtoPNet consists of a generalized convolutional layer [45] p of prototypes and a fully-connected layer f. The layer f has no bias, but it has a weight matrix \(f_m\). The input layer is therefore followed by the layer p, the layer f, and the logits. The architecture of Shallow-ProtoPNet is thus similar to that of ProtoPNet, described in the “Working principle of ProtoPNet” section, except that Shallow-ProtoPNet does not use the negative reasoning process, as recommended by [33, Theorem 3.1]. Layer p is both interpretable and transparent, whereas layer f is an indispensable fully-connected layer. Layer p is transparent because the prototypes used to make predictions, along with their similarity scores for a given input image, can be inspected directly, see Fig. 1.

To classify an input image, the model computes the Euclidean distance between each patch of the input image and each learned prototype. The similarity score of a prototype is the maximum of the reciprocals of the distances between that prototype and the patches of the input image. The smaller the distance, the larger its reciprocal, so each prototype has exactly one similarity score, determined by its closest patch. The vector of similarity scores is then multiplied by the weight matrix of the dense layer f to obtain the logits, which are normalized using Softmax to determine the class of the input image.
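The scoring step described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the toy tensor sizes, the stride of 1, and the small `eps` guard against division by zero are all hypothetical choices made for this example.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def similarity_scores(image, prototypes, eps=1e-8):
    """Return one similarity score per prototype for a single image.

    image:      array of shape (C, H, W)
    prototypes: array of shape (K, C, h, w)
    eps:        hypothetical guard against division by zero
    """
    _, c, h, w = prototypes.shape
    # Every stride-1 patch of the image: shape (H-h+1, W-w+1, C, h, w).
    patches = sliding_window_view(image, (c, h, w))[0]
    scores = np.empty(len(prototypes))
    for k, proto in enumerate(prototypes):
        # Euclidean distance between this prototype and every patch.
        dist = np.sqrt(((patches - proto) ** 2).sum(axis=(-3, -2, -1)))
        # The maximum reciprocal distance is the reciprocal of the minimum.
        scores[k] = 1.0 / (dist.min() + eps)
    return scores


def softmax(z):
    z = z - z.max()  # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()


rng = np.random.default_rng(0)
image = rng.random((3, 8, 8))               # toy stand-in for a 3 x 224 x 224 image
prototypes = rng.random((6, 3, 3, 4))       # 3 classes x 2 prototypes, toy size
f_m = np.kron(np.eye(3), np.ones((1, 2)))   # class-by-prototype weights, no bias

scores = similarity_scores(image, prototypes)
probs = softmax(f_m @ scores)
predicted_class = int(np.argmax(probs))
```

Materializing every patch is practical only at toy sizes; at full image size a convolution-style formulation would be needed.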

Prototypes are similar to certain patches of training images that give very high similarity scores. The main difference between Shallow-ProtoPNet and the other ProtoPNet models is that it does not use any black-box part as its baseline, whereas each of the other ProtoPNet models is built on a pretrained black-box base model. For comparison purposes, as mentioned in the “Dataset” section, we used the same resized images for all the models.

The ProtoPNet model series does not use fractional pooling layers (before the generalized convolutional layer p), which can handle variable input image sizes. Instead, it uses convolutional layers, specifically those of ResNet, DenseNet, and VGG, which require a fixed input image size. This is evidenced in the work of Gautam et al. [46], who used the ResNet-34 model, in the work of Wei et al. [47], who applied ResNet-152, and in the work of Ukai et al. [48].

Training of Shallow-ProtoPNet

Let \(x_i\) and \(y_i\) be the training images and their labels, for \(1\le i\le n\), where n is the size of the training set. Let \(P_i\) denote the set of prototypes for class i, and let P represent the set of all prototypes. Let \(d^2\) be the square of the Euclidean distance d between two tensors, here a prototype and a patch of an image x. Our goal is to solve the following optimization problem:

$$\min _{P}\dfrac{1}{n}\sum \limits ^n_{i=1}\text{CE}(f\circ p(x_i), y_i)+\lambda \cdot \text{CC}, \tag{1}$$

where CC is given by

$$\text{CC} = \dfrac{1}{n}\sum \limits ^n_{i=1}\min _{j:\, p_j\in P_{y_i}}\ \min _{\mathcal {X}\in \text{patches}\left( x_i\right) }d^2(\mathcal {X}, p_j). \tag{2}$$
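As a rough illustration of how Equations (1) and (2) combine, the sketch below evaluates the objective for precomputed quantities. It assumes that the minimum squared distances between each image and each prototype are already available, and that the inner minimum for each image runs over the prototypes of that image's own class. All function and variable names are hypothetical, and the default `lam=0.9` is the value of \(\lambda\) reported in this section.

```python
import numpy as np


def cross_entropy(logits, labels):
    """Mean cross-entropy of softmax(logits) against integer labels."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()


def cluster_cost(min_sq_dist, labels, proto_class):
    """Per image, the smallest d^2 to a prototype of its own class, averaged.

    min_sq_dist: (n, K) array; entry (i, j) is the minimum over patches of
                 x_i of d^2(patch, p_j)
    labels:      (n,) integer class of each image
    proto_class: (K,) integer class of each prototype
    """
    own_class = proto_class[None, :] == labels[:, None]   # (n, K) mask
    masked = np.where(own_class, min_sq_dist, np.inf)
    return masked.min(axis=1).mean()


def objective(logits, min_sq_dist, labels, proto_class, lam=0.9):
    """Cross-entropy plus lambda times the cluster cost."""
    return cross_entropy(logits, labels) + lam * cluster_cost(
        min_sq_dist, labels, proto_class)


# Toy example: 2 images, 2 classes, 2 prototypes per class (made-up numbers).
labels = np.array([0, 1])
proto_class = np.array([0, 0, 1, 1])
min_sq_dist = np.array([[4.0, 1.0, 0.5, 9.0],
                        [2.0, 3.0, 6.0, 5.0]])
logits = np.zeros((2, 2))

cc = cluster_cost(min_sq_dist, labels, proto_class)        # (1.0 + 5.0) / 2 = 3.0
total = objective(logits, min_sq_dist, labels, proto_class)
```

With uniform logits the cross-entropy term equals ln 2, so the toy objective is ln 2 + 0.9 × 3.0.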

Let x be an input image of shape \(3\times 224 \times 224\) and \(p^i_j\) be any prototype of shape \(3 \times h\times w\), where \(1< h,~ w < 224\), that is, h and w are not simultaneously equal to 1 or 224. In our experiments, we used prototypes with spatial dimensions \(70 \times 100\), and each class has 10 prototypes. Suppose \(p^1_1, \cdots , p^1_{10}\), \(p^2_1, \cdots , p^2_{10}\) and \(p^3_1, \cdots , p^3_{10}\) are prototypes for the first, second, and third classes, respectively, where \(p^i_j\) is the jth prototype of the ith class.
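To give a sense of scale, the following arithmetic uses the shapes stated above. The stride of 1 and the absence of padding are assumptions made for this illustration; the text does not specify them.

```python
C, H, W = 3, 224, 224          # input image shape from the text
h, w = 70, 100                 # prototype spatial dimensions from the text
classes, per_class = 3, 10     # three classes, ten prototypes each

# Assumption: the prototype slides with stride 1 and no padding.
patch_positions = (H - h + 1) * (W - w + 1)
values_per_prototype = C * h * w
total_prototypes = classes * per_class

print(patch_positions)         # 19375
print(values_per_prototype)    # 21000
print(total_prototypes)        # 30
```

Under these assumptions, each prototype is compared with 155 × 125 = 19,375 candidate patches per image.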

As shown in Fig. 2, an input image x is first fed to the generalized layer p to calculate similarity scores, and those similarity scores are then connected to the logits by the dense layer f. The logits are normalized using Softmax to obtain the class probabilities used for prediction. The predictions are compared with the labels y to calculate the cross-entropy. The objective function in Equation (1) is minimized over the set of prototypes P, that is, optimization yields the prototypes that minimize the cross-entropy together with the cluster cost.

A prototype \(p^i_j\) has the same depth as the input image x, but its spatial dimensions \(h\times w\) are smaller than the input image’s spatial dimensions \(224\times 224\). Therefore, \(p^i_j\) has the shape of a patch of x and can be compared directly with the patches of x. As explained in the “Working principle of ProtoPNet” section, Shallow-ProtoPNet also calculates similarity scores. For each prototype \(p^i_j\), the model identifies the patch of x that is most similar to it, that is, the patch with the highest similarity score, and reports that score. At this step, Shallow-ProtoPNet differs strikingly from the other ProtoPNet models.

Other ProtoPNet models compare a prototype with a latent patch of x instead of a patch of x, where a latent patch is a part of the output of the baseline of those models. Because Shallow-ProtoPNet compares prototypes with patches of x directly, it does not lose any information between x and p to the convolutional or pooling layers of a baseline. Fig. 2 shows only the similarity scores 0.13828, 0.08940, and 0.05628 for \(p^1_1\), \(p^2_1\) and \(p^3_{10}\), although a similarity score is calculated for every prototype. Let S be the matrix of similarity scores. The logits are obtained by multiplying S with \(f_m\), that is, the logits are weighted sums of the similarity scores. The logits 1.3149, 0.7712, and 0.6365 for the three classes are given in Fig. 2. The patches in the source images that are most similar to the prototypes \(p^1_1, p^1_{10}\), \(p^2_1, p^2_{10}\), \(p^3_1\) and \(p^3_{10}\) are enclosed by green rectangles.

From Equation (1), we see that a lower cross-entropy (CE) is necessary for better classification. To cluster prototypes around their respective classes, the cluster cost (CC) must be small, see Equation (2). Since the number of classes (Coronavirus disease (COVID-19) [49], Normal, and Pneumonia) is small, we use only the positive reasoning process, similar to Quasi-ProtoPNet, see [33, Theorem 3.1]. For a class i, \(f^{(i,c)}_m = 0\) and \(f^{(i,j)}_m = 1\), where \(p_c \not \in P_i\), \(p_j \in P_i\), and \(f^{(r,s)}_m\) is the (r, s) entry of the weight matrix \(f_m\). Therefore, a prototype has a positive connection with its correct class and zero connection with its incorrect classes. The hyperparameter \(\lambda\) is set to 0.9. Stochastic gradient descent [50] is used to optimize the parameters.
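The positive-reasoning weight matrix can be written down explicitly. The sketch below assumes that rows of \(f_m\) index classes, that columns index prototypes, and that prototypes are ordered class by class; the similarity scores are made-up illustrative values, not results from the paper.

```python
import numpy as np

num_classes, per_class = 3, 10

# Assumed layout: row r = class, column s = prototype, ordered class by class.
proto_class = np.repeat(np.arange(num_classes), per_class)            # (30,)
f_m = (proto_class[None, :] == np.arange(num_classes)[:, None]).astype(float)

# Illustrative similarity scores only; not values from the paper.
scores = np.full(num_classes * per_class, 0.05)
scores[:per_class] = 0.10      # prototypes of the first class match better

logits = f_m @ scores          # no bias term
```

Each logit is then simply the sum of the similarity scores of that class's own ten prototypes, here approximately 1.0, 0.5, and 0.5.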

Visualization of prototypes

For a prototype and a training image x, Shallow-ProtoPNet identifies a patch on x that yields a similarity score at least at the 95th percentile of all the similarity scores, and the identified patch is projected to visualize the prototype. Also, each prototype is updated with the most similar patch of x, that is, the value of each prototype is replaced with the value of the most similar patch of x. Since these projected patches are similar to the prototypes, we also call the projected patches prototypes, see Fig. 1.
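The update step, in which a prototype is replaced by its most similar training patch, might look like the following sketch. The function name, the toy sizes, and the stride of 1 are assumptions made here, and the 95th-percentile criterion used for visualization is not modeled.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def project_prototype(prototype, images):
    """Return the image patch closest to `prototype` and where it was found.

    prototype: array of shape (C, h, w)
    images:    array of shape (N, C, H, W)
    """
    best_dist, best_loc, best_patch = np.inf, None, None
    for n, image in enumerate(images):
        # All stride-1 patches of this image: shape (H-h+1, W-w+1, C, h, w).
        patches = sliding_window_view(image, prototype.shape)[0]
        sq_dist = ((patches - prototype) ** 2).sum(axis=(-3, -2, -1))
        row, col = np.unravel_index(np.argmin(sq_dist), sq_dist.shape)
        if sq_dist[row, col] < best_dist:
            best_dist = sq_dist[row, col]
            best_loc = (n, int(row), int(col))
            best_patch = patches[row, col].copy()
    return best_patch, best_loc


rng = np.random.default_rng(1)
images = rng.random((4, 3, 8, 8))            # toy training set
target = images[2, :, 1:4, 3:7]              # a known patch
prototype = target + 0.01 * rng.standard_normal(target.shape)  # noisy copy

projected, location = project_prototype(prototype, images)
# `projected` is the patch whose values would replace the prototype;
# `location` is (image index, top row, left column) of that patch.
```

In this toy run the noisy prototype is mapped back onto the patch it was derived from.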

Working principle of ProtoPNet

The architecture of ProtoPNet (https://github.com/cfchen-duke/ProtoPNet) consists of the convolutional layers of a baseline, such as VGG-16, followed by some additional \(1\times 1\) convolutional layers for fine-tuning. Then, it has a generalized convolutional layer [51] of prototypical parts (prototypes), and a fully-connected layer that connects prototypical parts to logits. Each prototypical part is a tensor with spatial dimensions \(1\times 1\) and a depth equal to the depth of the convolutional layers. Therefore, the layer of prototypical parts is a vector of tensors.

ProtoPNet compares learned prototypical parts with latent patches of an input image to make the classification.

ProtoPNet uses similarity scores in the classification process. Since Shallow-ProtoPNet is a sub-class of ProtoPNet, it follows the same approach. Fig. 1 presents an example of the classification process based on similarity scores when Shallow-ProtoPNet is applied.

The similarity score of a prototype with an input image is the maximum of the reciprocals of the Euclidean distances between the prototype and each latent patch of the input image. ProtoPNet applies a log function as a non-linearity to the similarity scores. The weighted sums of the similarity scores give the logits. At the fully-connected layer, a positive weight of 1 is assigned between the similarity scores of prototypes and their correct classes, and a negative weight of \(-0.5\) is assigned between the similarity scores of prototypes and their incorrect classes.

The negative weights are assigned to scatter prototypes away from the classes to which they do not belong, that is, to incorporate the negative reasoning process. The positive weights, on the other hand, are assigned to cluster the prototypes around their correct classes, that is, to incorporate the positive reasoning process. Softmax is used to normalize the logits. Being closely related to ProtoPNet, our model Shallow-ProtoPNet functions similarly to ProtoPNet.
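The effect of the two weighting schemes on the logits can be compared on a toy example. The sketch below assumes a class-by-prototype weight layout and uses made-up similarity scores; the log non-linearity that ProtoPNet applies is omitted so that only the weights differ.

```python
import numpy as np

num_classes, per_class = 3, 2      # toy sizes, not the paper's configuration
proto_class = np.repeat(np.arange(num_classes), per_class)
same_class = proto_class[None, :] == np.arange(num_classes)[:, None]

# Weight 1 to the correct class, -0.5 to incorrect classes (negative reasoning).
protopnet_weights = np.where(same_class, 1.0, -0.5)
# Weight 1 to the correct class, 0 otherwise (positive reasoning only).
positive_only = np.where(same_class, 1.0, 0.0)

# Illustrative similarity scores only.
scores = np.array([0.9, 0.8, 0.2, 0.1, 0.3, 0.1])

with_negative = protopnet_weights @ scores   # approximately [1.35, -0.75, -0.6]
without_negative = positive_only @ scores    # approximately [1.7, 0.3, 0.4]
```

Both schemes pick the same class in this example; negative reasoning additionally lowers the logit of each class by half the evidence found for the other classes.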

Novelty of Shallow-ProtoPNet

The novelty of our model is that it is not only interpretable but also transparent. Shallow-ProtoPNet uses the similarity between prototypes and patches of training images whose classes are known. Shallow-ProtoPNet is therefore interpretable: it shows that an image assigned to a class contains patches that are more similar to patches of training images of that class, see Fig. 1. Our model is transparent because it does not use any black-box model as a baseline, see Fig. 2.

The other ProtoPNet models, on the other hand, rely on pretrained black-box models as their baselines, so they are not transparent. Also, our model does not apply any non-linearity to similarity scores, unlike the other ProtoPNet models. Additionally, our model uses only the positive reasoning process, as recommended by [33, Theorem 3.1], and it uses prototypes with spatial dimensions greater than \(1\times 1\).

The transparency or interpretability of a model becomes essential when the model is to be used for high-risk decisions. Shallow-ProtoPNet is not more accurate than the other ProtoPNet models, but it is more transparent. Besides being a step towards developing deeper and more transparent models, Shallow-ProtoPNet has the following advantages:

1. The size of Shallow-ProtoPNet is much smaller than that of its related models. For example, Shallow-ProtoPNet can be as small as 50 KB, while the other ProtoPNet models have a minimum size of 28,000 KB, and some of them can even exceed 300,000 KB in size. Therefore, Shallow-ProtoPNet is suitable for use in embedded systems where memory size matters.

2. Shallow-ProtoPNet performs well on a relatively small-sized dataset of X-ray images. The datasets collected by domain experts are typically small in size. In many cases, it is either too expensive or impossible to obtain abundant data, such as CT-scan or X-ray images of rare diseases or such images at the early stages of an epidemic.

Dataset

In this work, we used a dataset of chest X-ray images of COVID-19 patients [52], pneumonia patients, and normal people [53]. The pretrained base models of the other ProtoPNet models accept only input images of size \(224\times 224\). Therefore, after cleaning the data, the images were resized to \(224 \times 224\). The resized images were segregated into three classes: COVID-19, Normal, and Pneumonia.

The COVID-19 class has 1248 training images and 248 test images, the Normal class has 1341 training images and 234 test images, and the Pneumonia class has 3875 training images and 390 test images. The dataset is therefore noticeably unbalanced: the Pneumonia class alone accounts for about 60% of the training images. Nevertheless, the dataset is not too small for the depth of our model. With only two layers, however, our model is not expected to outperform the other ProtoPNet models in accuracy on a large dataset.
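The class proportions follow directly from the counts above. The short calculation below uses only those counts; the variable names are illustrative.

```python
train = {"COVID-19": 1248, "Normal": 1341, "Pneumonia": 3875}
test = {"COVID-19": 248, "Normal": 234, "Pneumonia": 390}

train_total = sum(train.values())   # 6464
test_total = sum(test.values())     # 872

# Share of each class in the training set.
train_share = {name: count / train_total for name, count in train.items()}

for name, share in train_share.items():
    print(f"{name}: {share:.1%} of the training set")
```

The training set thus contains roughly three Pneumonia images for every COVID-19 or Normal image.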