Cohort characteristics

We analyzed data from 806 semi-critical or critical COVID-19 patients who visited the emergency room of Chungbuk National University Hospital. Among these patients, 18.8% were classified as severe according to Criterion I, and 41.1% were classified as severe according to Criterion II. According to Criterion I, the mean age of patients classified as severe and critical was 65.1 ± 19.4 and 67.1 ± 15.7 years, respectively. Under Criterion II, the mean age of severe patients was 63.2 ± 20.1 years, and that of critical patients was 68.7 ± 16.2 years. In addition, 62.7% of critical patients were male according to Criterion I, and 56.8% were male according to Criterion II. Criterion II classified relatively younger and female patients as severe cases.

Table 1 provides detailed information on 16 characteristics of the COVID-19 patients included in the cohort, including initial nursing data, diagnostic test results, and X-ray image interpretation. Histograms of these features before and after data preprocessing are provided in the Supplementary Materials (Figs. S4–S7). They offer a comprehensive view of the data distributions (see Fig. S3 in the Supplementary Materials) and help identify outliers and anomalies. The Supplementary Materials also include comparative histograms for severe and critical cases, highlighting the features whose values differ notably between the two groups.

To formally assess group differences, we conducted Welch’s t-tests comparing the moderate and severe groups under each severity criterion. Under Criterion I, several variables differed significantly between groups (all \(p < 0.05\)): neutrophil count, albumin, hsCRP, protein, white blood cell (WBC) count, respiratory rate, pulse rate, diastolic blood pressure, glucose, systolic blood pressure, and platelet count. In contrast, age, body temperature, and BMI did not differ significantly (\(p \ge 0.05\)). Under Criterion II, severe patients had significantly higher neutrophil percentage, hsCRP, WBC count, respiratory rate, pulse rate, and glucose level, and significantly lower albumin, total protein, diastolic blood pressure, systolic blood pressure, and platelet count than moderate patients (\(p < 0.05\)). Again, age, body temperature, and BMI showed no significant differences (\(p \ge 0.05\)). Full Welch’s t-test results are provided in the Supplementary Materials (Tables S3, S4).
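As an illustration of the test used above, the sketch below computes Welch’s t statistic and the Welch-Satterthwaite degrees of freedom with the Python standard library. The function name `welch_t` and the sample values are hypothetical and not taken from the cohort. In practice, `scipy.stats.ttest_ind(a, b, equal_var=False)` returns the same statistic together with a two-sided p-value.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Return Welch's t statistic and Welch-Satterthwaite degrees of freedom.

    Unlike Student's t-test, the two groups are not assumed to share a variance.
    """
    n_a, n_b = len(a), len(b)
    # Squared standard error of each group mean (sample variance, n - 1 denominator)
    se2_a = variance(a) / n_a
    se2_b = variance(b) / n_b
    t = (mean(a) - mean(b)) / math.sqrt(se2_a + se2_b)
    # Welch-Satterthwaite approximation of the degrees of freedom
    df = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (n_a - 1) + se2_b ** 2 / (n_b - 1))
    return t, df


# Hypothetical marker values for two small groups, for illustration only
moderate = [1, 2, 3, 4, 5]
severe = [2, 4, 6, 8, 10]
t, df = welch_t(moderate, severe)
print(f"t = {t:.3f}, df = {df:.2f}")
```

The non-integer degrees of freedom are expected: Welch's correction shrinks them when the group variances differ.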

Model performance

We assessed the performance of five models, namely Logistic Regression (LR), Random Forest (RF), Support Vector Machine (SVM), LightGBM (LGB), and XGBoost (XGB), as well as a voting ensemble classifier (EL), using accuracy, precision, recall, F1-score, and ROC-AUC. Recall was particularly emphasized because it measures the proportion of actual severe cases that are correctly identified, which aligns with the goal of improving ward allocation for severe patients.
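A minimal sketch of how these metrics follow from a 2×2 confusion matrix, treating severe as the positive class (label 1) and assuming a 0.5 probability threshold; the function names, labels, and scores are hypothetical. ROC-AUC is computed here through its rank interpretation (the probability that a randomly chosen severe case receives a higher score than a randomly chosen moderate case), which gives the same value as the area under the ROC curve.

```python
def confusion_counts(y_true, y_pred):
    """Count TP, FP, FN, TN with 1 = severe (positive class), 0 = moderate."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn


def classification_metrics(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    # Recall (sensitivity): share of truly severe patients flagged as severe
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def roc_auc(y_true, scores):
    """Probability that a random severe case outscores a random moderate case
    (ties count one half), i.e. the normalized Mann-Whitney U statistic."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical labels and predicted probabilities, for illustration only
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.6, 0.3, 0.7, 0.4, 0.2, 0.2, 0.1, 0.05]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed 0.5 threshold
print(classification_metrics(y_true, y_pred))
print(roc_auc(y_true, scores))
```

Lowering the threshold typically raises recall at the cost of precision, which is why a recall-oriented evaluation should report the threshold used.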

Table 2 presents the predictive performance of each model under Severity Criteria I and II. Among the single models, SVM and LGB achieved the highest recall under Criterion I (94.6%), highlighting their effectiveness in detecting severe cases, and they also performed well on the other metrics. Under Criterion II, LGB and XGB achieved the highest recall (84.9%). The EL model performed consistently well across all evaluation metrics for both severity criteria and achieved the highest recall overall: 96.2% under Criterion I and 88.2% under Criterion II. These findings suggest that ensemble learning effectively combines the strengths of individual models to improve predictive robustness.
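The text does not state whether EL uses hard (majority) or soft (probability-averaging) voting. The sketch below assumes equal-weight soft voting and contrasts it with hard voting; each base model is represented only by a hypothetical predicted probability of severity for one patient, and the function names are illustrative. scikit-learn's `VotingClassifier` with `voting="soft"` performs the same averaging over fitted estimators.

```python
def soft_vote(probabilities, weights=None, threshold=0.5):
    """Weighted mean of per-model P(severe), then threshold the mean.

    probabilities: dict mapping model name -> P(severe) for one patient.
    weights: optional dict of non-negative model weights (equal if None).
    """
    if weights is None:
        weights = {name: 1.0 for name in probabilities}
    total = sum(weights[name] for name in probabilities)
    p_mean = sum(weights[name] * p for name, p in probabilities.items()) / total
    return p_mean, int(p_mean >= threshold)


def hard_vote(labels):
    """Majority vote over 0/1 predictions (an exact tie counts as severe)."""
    return int(sum(labels) * 2 >= len(labels))


# Hypothetical outputs of the five base models for a single patient
patient = {"LR": 0.35, "RF": 0.55, "SVM": 0.62, "LGB": 0.71, "XGB": 0.48}
p, label = soft_vote(patient)
print(f"ensemble P(severe) = {p:.3f}, predicted label = {label}")
print("hard-vote label:", hard_vote([int(v >= 0.5) for v in patient.values()]))
```

Soft voting lets a confident model outweigh several marginal ones, which is one plausible reason an ensemble can gain recall over its members.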

Across all models, performance metrics, particularly ROC-AUC and recall, were consistently higher under Criterion I than under Criterion II (see Fig. S10 in Supplementary Materials). This pattern suggests that Criterion I provides a cleaner and more discriminative target for model training, likely because it aligns with actual ICU-level clinical interventions.

Feature importance analysis

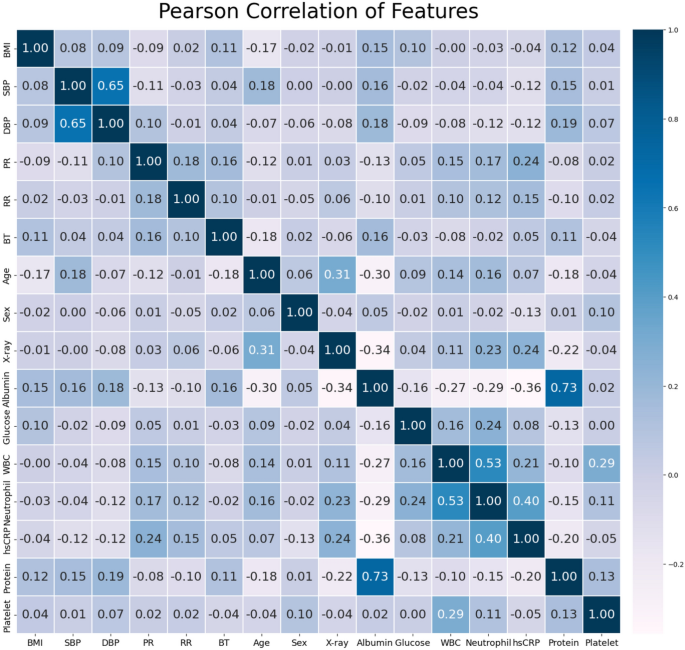

Analyzing the relationships among features is an important step in developing accurate predictive models. Figure 2 shows a heatmap of the pairwise correlation coefficients (\(r\)) between the selected features. Notable positive correlations were observed between SBP and DBP (\(r = 0.73\)), between albumin and protein (\(r = 0.65\)), and between white blood cells and neutrophils (\(r = 0.53\)).
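Each heatmap cell is a correlation coefficient. Assuming the Pearson coefficient (the estimator is not named in the text), a cell can be computed as below; the function names and the vital-sign values are hypothetical. On Python 3.10 and later, `statistics.correlation` returns the same Pearson value.

```python
import math
from itertools import combinations


def pearson_r(x, y):
    """Pearson correlation: covariance divided by the product of the spreads."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def correlation_matrix(features):
    """Pairwise r for a dict of feature name -> list of values (upper triangle)."""
    return {(f, g): pearson_r(features[f], features[g])
            for f, g in combinations(features, 2)}


# Hypothetical measurements for five patients, for illustration only
features = {
    "SBP": [110, 125, 140, 132, 150],
    "DBP": [70, 78, 88, 80, 92],
    "glucose": [95, 180, 120, 140, 100],
}
for (f, g), r in correlation_matrix(features).items():
    print(f"r({f}, {g}) = {r:.2f}")
```

Highly correlated pairs such as SBP and DBP carry overlapping information, which is worth keeping in mind when reading permutation importances, since shuffling one feature of a correlated pair can understate its importance.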

Predictive performance

We conducted an extensive analysis to identify the most influential features for predicting COVID-19 severity. To do this, we employed permutation feature importance, which evaluates each feature's impact on the model's predictions by randomly shuffling that feature's values, one feature at a time, and measuring the resulting decrease in performance33.
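A minimal sketch of permutation importance, under stated assumptions: accuracy as the score, a hypothetical hsCRP threshold rule standing in for a trained model, and toy patient rows. All names here are illustrative. For fitted scikit-learn estimators, `sklearn.inspection.permutation_importance` implements the same idea with configurable scoring and repeats.

```python
import random


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean drop in accuracy when one feature column is shuffled at a time.

    predict: callable mapping a list of rows (dicts) to a list of 0/1 labels.
    X: list of dicts (feature name -> value); y: list of 0/1 labels.
    """
    rng = random.Random(seed)
    baseline = accuracy(y, predict(X))
    importances = {}
    for feature in X[0]:
        drops = []
        for _ in range(n_repeats):
            column = [row[feature] for row in X]
            rng.shuffle(column)  # breaks the link between this feature and the label
            X_perm = [{**row, feature: v} for row, v in zip(X, column)]
            drops.append(baseline - accuracy(y, predict(X_perm)))
        importances[feature] = sum(drops) / n_repeats
    return importances


# Hypothetical stand-in for a trained model: flags severity from hsCRP only
def toy_model(rows):
    return [int(r["hsCRP"] > 5.0) for r in rows]


X = [{"hsCRP": c, "BMI": b} for c, b in
     [(1.2, 22), (8.5, 27), (0.4, 31), (12.0, 24), (6.3, 20), (2.2, 29)]]
y = [0, 1, 0, 1, 1, 0]
print(permutation_importance(toy_model, X, y))
```

Because the toy model ignores BMI, shuffling BMI never changes a prediction and its importance is exactly zero, while shuffling hsCRP degrades accuracy.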

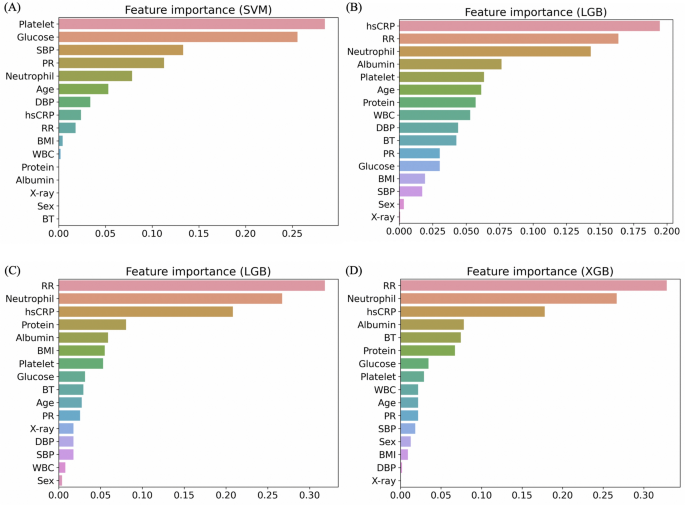

Figure 3 displays the feature importance for the top-performing models under Criteria I and II. Panels (A, B) show the SVM and LGB models under Criterion I, and panels (C, D) show the LGB and XGB models under Criterion II. Under Criterion I, the SVM model assigned high importance to platelets and glucose, whereas the LGB model prioritized hsCRP and RR. Under Criterion II, the LGB model identified neutrophils and hsCRP as key features, whereas the XGB model emphasized neutrophils and RR. These findings may help medical professionals identify and treat severe patients more effectively. Detailed feature importance results for all models under both criteria are provided in Supplementary Materials Figs. S11 and S12.

We also computed SHapley Additive exPlanations (SHAP) values, which attribute each individual prediction to contributions from the input features, to gain deeper insight into how each feature drives the model’s predictions33. Because LGB was among the top-performing single models under both Criterion I and Criterion II, we computed SHAP values specifically for the LGB model; additional details are provided in the Supplementary Materials (Figs. S13, S14). The mean absolute SHAP value provides a global measure of feature importance by quantifying the average magnitude of each feature’s contribution to the model’s predictions, regardless of direction. Features with higher mean absolute SHAP values are considered more influential across the dataset. This summary does not indicate whether a feature increases or decreases the predicted probability of a specific class, only how strongly it affects the model output overall.
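To illustrate the mean absolute SHAP summary, the sketch below uses a linear model rather than LightGBM because its SHAP values have a closed form: assuming independent features, the contribution of feature \(i\) is \(\phi_i(x) = w_i (x_i - \mathbb{E}[x_i])\) on the model's raw (log-odds) scale. For tree ensembles such as LGB, the `shap` package's `TreeExplainer` computes SHAP values instead. The weights, patient values, and function names are hypothetical.

```python
def linear_shap(weights, X):
    """Exact SHAP values for f(x) = b + sum_i w_i * x_i under feature
    independence: phi_i(x) = w_i * (x_i - mean_i), on the raw output scale."""
    features = list(weights)
    means = {f: sum(row[f] for row in X) / len(X) for f in features}
    return [{f: weights[f] * (row[f] - means[f]) for f in features} for row in X]


def mean_abs_shap(shap_rows):
    """Global importance: average |phi| per feature, sorted high to low."""
    n = len(shap_rows)
    totals = {f: sum(abs(r[f]) for r in shap_rows) / n for f in shap_rows[0]}
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical log-odds weights and patient rows, for illustration only
weights = {"hsCRP": 0.30, "albumin": -1.20, "BMI": 0.02}
X = [
    {"hsCRP": 1.0, "albumin": 4.2, "BMI": 22.0},
    {"hsCRP": 9.0, "albumin": 3.1, "BMI": 28.0},
    {"hsCRP": 4.0, "albumin": 3.6, "BMI": 25.0},
]
phi = linear_shap(weights, X)
print(mean_abs_shap(phi))
```

The per-patient values keep their sign (here, low albumin pushes the second patient toward severe), but taking absolute values before averaging discards that direction, which is exactly the limitation noted above.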

Additionally, we trained a simplified decision tree classifier using the same feature set to provide an interpretable, rule-based structure for clinical use. The trees constructed under Criterion I and Criterion II are presented in the Supplementary Materials (Figs. S15, S16).
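How the simplified tree was built is not described here. As an assumption, the sketch below shows the Gini-impurity split search that CART-style trees apply at each node (scikit-learn's `DecisionTreeClassifier` uses `criterion="gini"` by default and places thresholds at midpoints between sorted values); capping the depth, for example with `max_depth`, is one common way to keep such a tree small enough to read as rules. The respiratory-rate values and function names are hypothetical.

```python
def gini(labels):
    """Gini impurity of a list of 0/1 labels."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2 * p * (1 - p)


def best_split(values, labels):
    """Threshold on one feature that minimizes the weighted Gini impurity of
    the two children (value <= threshold goes left, otherwise right)."""
    pairs = sorted(zip(values, labels))
    n = len(pairs)
    best = (float("inf"), None)
    for i in range(1, n):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no threshold separates equal values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [lab for v, lab in pairs if v <= threshold]
        right = [lab for v, lab in pairs if v > threshold]
        score = len(left) / n * gini(left) + len(right) / n * gini(right)
        if score < best[0]:
            best = (score, threshold)
    return best[1], best[0]


# Hypothetical respiratory rates (breaths/min) and severity labels
rr = [16, 18, 20, 22, 26, 28, 30, 24]
severe = [0, 0, 0, 0, 1, 1, 1, 1]
threshold, impurity = best_split(rr, severe)
print(f"best split: RR <= {threshold} (weighted Gini = {impurity:.3f})")
```

A full tree repeats this search over every feature at every node and recurses into the children, so each root-to-leaf path reads as an if-then rule.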

Analysis of misclassification in machine learning and initial ward allocation

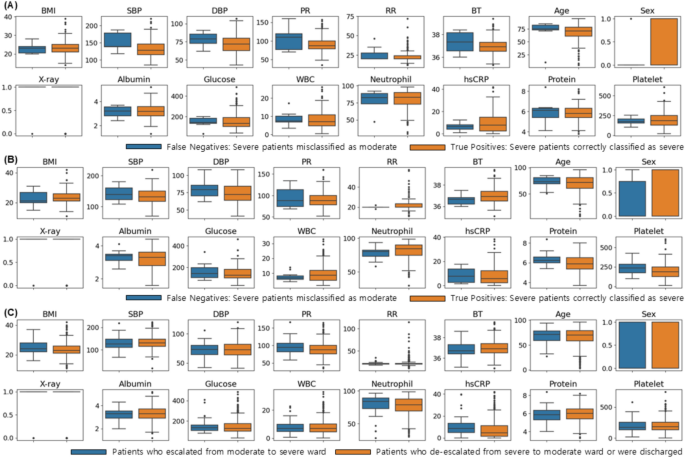

To examine misclassification by the machine learning model and in initial ward allocation, we compared feature distributions by patient status, as illustrated in Fig. 4. Panels (A) and (B) depict the feature distributions of patients classified under Criteria I and II, respectively, by the LGB model, one of the best-performing single models. Blue boxes represent severe patients incorrectly classified as moderate (false negatives), and orange boxes represent severe patients correctly classified as severe (true positives). In Fig. 4A, BMI was higher in true-positive (orange) patients, whereas SBP and DBP showed similar distributions in both groups. Glucose, neutrophil, and hsCRP levels were also elevated in true-positive patients, and the other variables displayed comparable distributions. Figure 4B shows similar trends to Fig. 4A: BMI was higher in true-positive patients, SBP and DBP had similar distributions, the differences in glucose, neutrophils, and hsCRP were consistent with those in Fig. 4A, and the other variables followed similar patterns.
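The false-negative versus true-positive comparison can be sketched as follows: restrict to actually severe patients, split them by the model's prediction, and summarize each feature per group (medians here, the centre line of each box). The function name, patients, and predictions are hypothetical.

```python
from statistics import median


def fn_tp_medians(rows, y_true, y_pred, features):
    """Among actually severe patients, compare per-feature medians between
    false negatives (predicted moderate) and true positives (predicted severe).

    rows: list of dicts (feature name -> value); labels use 1 = severe, 0 = moderate.
    """
    groups = {"FN": [], "TP": []}
    for row, t, p in zip(rows, y_true, y_pred):
        if t == 1:  # moderate patients are excluded from this comparison
            groups["TP" if p == 1 else "FN"].append(row)
    return {
        name: {f: median([r[f] for r in members]) for f in features}
        for name, members in groups.items()
        if members  # skip an empty group instead of failing on median([])
    }


# Hypothetical patients, labels, and model predictions, for illustration only
rows = [
    {"hsCRP": 2.1, "glucose": 110},
    {"hsCRP": 9.4, "glucose": 185},
    {"hsCRP": 1.5, "glucose": 98},
    {"hsCRP": 7.8, "glucose": 160},
    {"hsCRP": 0.6, "glucose": 92},
]
y_true = [1, 1, 1, 1, 0]
y_pred = [0, 1, 0, 1, 0]
for group, meds in fn_tp_medians(rows, y_true, y_pred, ["hsCRP", "glucose"]).items():
    print(group, meds)
```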

Figure 4C illustrates the distribution of features based on actual ward allocation. Blue boxes represent patients who were transferred from an intermediate ward to the ICU, and orange boxes represent patients who were either transferred from the ICU to an intermediate ward or discharged from the ICU or intermediate ward. Patients transferred to the ICU (blue) had higher BMI and higher glucose, neutrophil, and hsCRP levels, whereas SBP and DBP showed similar distributions in both groups; the remaining variables showed either similar distributions or no significant differences.

Confusion-matrix analysis of these misclassifications provides meaningful insight into the comparative utility of the two severity criteria. Under Criterion II, many false negatives involved patients who ultimately required advanced interventions such as mechanical ventilation or ECMO, suggesting that the broader definition may blur the distinction between moderate and severe cases and reduce model sensitivity. By contrast, most errors under Criterion I occurred near the severity threshold and involved borderline values of physiological or inflammatory markers such as hsCRP and neutrophil count. This indicates that Criterion I offers a more learnable and clinically coherent target for machine learning algorithms, with clearer boundaries for model prediction.

In addition, we conducted a logistic regression analysis to statistically examine factors associated with false-negative misclassification. Respiratory rate (RR), serum albumin, neutrophil count, and high-sensitivity C-reactive protein (hsCRP) were identified as significant predictors (\(p < 0.05\)). These findings suggest that patients with less prominent respiratory or inflammatory responses are more likely to be incorrectly classified as moderate. Details of the regression results are provided in Supplementary Materials Table S5.
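A sketch, under stated assumptions, of this kind of analysis: a logistic regression fit by plain gradient descent that relates one standardized predictor (RR) to a false-negative indicator among actually severe patients. The data and function names are hypothetical, and only one predictor is used. The sketch reports an odds ratio but not p-values, which require standard errors from the fitted model; statistical packages such as statsmodels' `Logit` report these as Wald tests.

```python
import math


def fit_logistic(X, y, lr=0.1, epochs=5000):
    """Logistic regression by batch gradient descent on the mean log-loss.

    X: list of feature vectors (already standardized); y: 0/1 outcomes
    (here 1 = false negative, 0 = true positive among actual severe cases).
    Returns [intercept, coef_1, ..., coef_k].
    """
    k, n = len(X[0]), len(X)
    w = [0.0] * (k + 1)
    for _ in range(epochs):
        grad = [0.0] * (k + 1)
        for xi, yi in zip(X, y):
            z = w[0] + sum(wj * xj for wj, xj in zip(w[1:], xi))
            err = 1.0 / (1.0 + math.exp(-z)) - yi  # predicted probability minus label
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * g / n for wj, g in zip(w, grad)]
    return w


def standardize(column):
    """Center to mean 0 and scale to unit sample standard deviation."""
    m = sum(column) / len(column)
    sd = math.sqrt(sum((v - m) ** 2 for v in column) / (len(column) - 1))
    return [(v - m) / sd for v in column]


# Hypothetical respiratory rates of actual severe patients and FN indicator
rr = [18, 20, 19, 22, 24, 28, 26, 30, 21, 27]
is_fn = [1, 1, 0, 1, 0, 0, 0, 0, 1, 0]
coef = fit_logistic([[v] for v in standardize(rr)], is_fn)
print(f"odds ratio per 1 SD of RR: {math.exp(coef[1]):.2f}")
```

An odds ratio below 1 would mean that a higher respiratory rate lowers the odds of being missed, which matches the interpretation that patients with less prominent respiratory responses are more often misclassified as moderate.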

Altogether, these findings emphasize the clinical relevance of specific features in distinguishing between borderline and truly severe cases. By integrating confusion matrix analysis with feature-level interpretation, we can better understand the limitations of model predictions, improve performance, and guide the development of more robust triage and decision-support frameworks.

Figure 4. Box plots of features by patient status as classified by the LGB model under (A) Criterion I and (B) Criterion II (blue: severe patients misclassified as moderate, i.e., false negatives; orange: severe patients correctly classified as severe, i.e., true positives). (C) Box plots of features by actual ward allocation (blue: patients who moved from the moderate ward to the severe ward; orange: patients who moved from the severe ward to the moderate ward or were discharged from either the severe or moderate ward).